The following are highlights of recent research conducted by our students.

Cybersecurity

Verification of Quantitative Properties Supporting Mutable Arrays

Software systems often need to handle sensitive data securely, maintain user privacy, and operate efficiently. One way to ensure these qualities is by analyzing how a program behaves when it processes different inputs or runs in different situations. This type of analysis, called relational reasoning, helps uncover important properties like whether a program protects sensitive information or performs tasks consistently. While tools exist for analyzing some programs, they often struggle to handle features like mutable arrays, which are widely used to store and manage data in practical applications. The project’s novelty lies in creating better tools to analyze programs that use arrays, making the analysis more precise and more broadly applicable. By addressing key challenges in existing techniques, the research aims to bridge gaps in both theoretical understanding and practical implementation.

- Develop a program logic that can reason about programs with mutable arrays, and implement the related automation tools, such as a type checker and proof systems. This project is related to NSF grant 2451384.

- Design the syntax and semantics of a programming language that supports mutable array operations, and prove the theoretical soundness of the language. Design a logic to reason about general properties of such programs.
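As an informal illustration of the relational reasoning described above, the sketch below runs the same program twice on inputs that differ only in a secret value and checks that the public result is the same. The functions `mask_in_place`, `leaky_count`, and `same_public_output` are hypothetical names chosen for this example. This is a test on two concrete runs, not the program logic or type checker developed in the project, which would aim to establish the property for all pairs of runs.

```python
from typing import Callable, List

def mask_in_place(arr: List[int], secret: int) -> int:
    """Overwrite every element of a mutable array and return a public result.

    The result depends only on the array length, never on the secret.
    """
    for i in range(len(arr)):
        arr[i] = 0  # mutate the array; the secret is never stored
    return len(arr)

def leaky_count(arr: List[int], secret: int) -> int:
    """Store the secret in the array, then return a value derived from it."""
    if arr:
        arr[0] = secret  # the secret flows into the mutable array
    return sum(1 for x in arr if x > 0)  # the public result now depends on it

def same_public_output(
    prog: Callable[[List[int], int], int],
    public_arr: List[int],
    secret_a: int,
    secret_b: int,
) -> bool:
    """Run prog twice on equal public input and different secrets.

    Each run gets its own copy of the array, because prog mutates it.
    """
    result_a = prog(list(public_arr), secret_a)
    result_b = prog(list(public_arr), secret_b)
    return result_a == result_b

print(same_public_output(mask_in_place, [1, 2, 3], 5, -5))
print(same_public_output(leaky_count, [0, 0, 0], 5, -5))
```

The first program passes the check and the second fails it, because writing the secret into the array changes what the later read observes. Tracking that kind of flow through array updates is what makes mutable arrays hard for relational analyses.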

Gamified Learning

Spoofing involves disguising an email address, phone number, voice, video, website or other form of communication to impersonate someone else. Phishing typically combines several of these as part of a scheme to gain the trust of a target through impersonation. In the context of email spoofing, the goal is typically to redirect users to a spoofed website. These attacks have become more sophisticated and can lead to serious consequences, such as discord, false incrimination, and the theft of login credentials. With the rise of free AI tools, spoofing is expected to increase. This study aims to raise student and faculty awareness at Monmouth and to provide education on how these various impersonation spoofs can occur and how to detect them.

This video was created by graduate student Gurmeet Singh and contains false messages from seemingly famous individuals: Don’t Be Fooled by Deep Fakes

Through a grant funded by The National Science Foundation, students have created a gamified learning site, designed to educate users about the various ways communications can be spoofed:

Integrated Systems

ParkShark

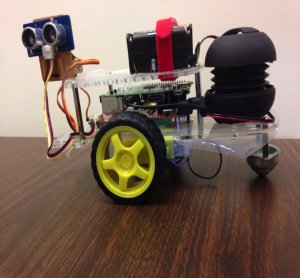

CSSE department students Davian Albaran and Drew McGovern designed and developed an innovative combination of IoT (Internet of Things) hardware and a software service that passively helps commuting students find available parking spots.

The following video contains Omar Ahmed and Andrew McGovern’s Hawk Talk presentation of all the capabilities of ParkShark.

Navigating campus parking is a daily challenge for students, faculty, and administrators alike. ParkShark is an innovative end-to-end solution that transforms the campus parking experience through advanced technology, automation, and real-time data analysis. Our system eliminates traditional parking permits by introducing digital ParkShark Tags that communicate via Bluetooth Low Energy (BLE) with our mobile app, seamlessly tracking vehicle locations and providing real-time parking availability for all parking spots on campus.

For everyday users, the ParkShark iPhone and Android applications deliver intuitive interfaces for locating available parking spots in their preferred lots at their preferred arrival times, as well as receiving live notifications about spot closures and campus announcements. The everyday user simply places their assigned ParkShark Tag anywhere on their car’s dashboard, similar to an E-ZPass device, and everything is handled automatically; the ParkShark Tag seamlessly communicates with the mobile app to report the parked vehicle to the server, allowing for a completely hands-free experience and eliminating the hassle of manual check-ins.

For campus police and parking enforcement, the ParkShark Patrol iPad app streamlines enforcement of campus parking policies, ticketing of policy-violating vehicles, and real-time spot monitoring. The ParkShark Admin web portal provides the police headquarters with powerful oversight tools that allow the police department to efficiently manage lot closures, campus alerts, ParkShark Tag assignment and replacement, and ticket statuses.

Behind the scenes, ParkShark Intelligence powers the system with a robust database and API that store and analyze all incoming and outgoing data, and a differential GPS station that corrects reported GPS data down to the centimeter. This smart backend service ensures unparalleled accuracy in spot tracking and information transfer.

ParkShark is an integrated cutting-edge software-hardware solution to revolutionize campus parking, providing a more intelligent, efficient, and user-friendly experience for all. Join us to explore the research, development, testing, and innovation that drives this transformative system, setting a new standard for smart campus infrastructure.

Mobile Application Development

Fludz

Fludz is a crowd-sourced flood data distribution and analysis service that allows users to report and view local real-time data about flooding conditions. The service grew out of Ava Taylor’s (’23) Honors thesis research on the lack of flood data at a local level and on finding a cost-effective way to report and track flooding. The app received a non-provisional US patent (18/635,475) and trademark in April 2025.

Marco Ocean Maps

The following video demonstrates a mobile app version of the Mid-Atlantic Ocean Data Portal that will allow Android and iPhone users to search and view thousands of maps showing natural features and human activities at sea. The app was developed for CS-492 – Senior Project.

Artificial Intelligence, Medtech

Predicting Patient Length of Stay in Hospitals Using Machine Learning

The primary mission of hospitals is to meet the demand for care by efficiently moving patients through the care pathway while simultaneously improving patient satisfaction and health outcomes. One of the crucial determinants for hospitals to maintain resource efficiency and deliver quality treatment is the patient length of stay (LOS), as it directly impacts bed availability, staffing requirements, and overall operational costs. Reducing LOS without compromising patient care is a significant challenge that hospitals face.

The goal of this project is to develop a machine learning system that predicts LOS at the time of patient admission, using the initial diagnosis and test results. By accurately predicting LOS, hospitals can better allocate resources, optimize patient flow, and improve overall patient care. The emphasis of this research is on feature engineering, one-hot encoding, and the Synthetic Minority Over-sampling Technique (SMOTE) for handling imbalanced datasets. Feature engineering involves selecting and transforming relevant variables to enhance the predictive power of the model. One-hot encoding converts categorical variables into a numeric format that machine learning algorithms can accept. SMOTE addresses class imbalance, which is common in real-world healthcare data. Both regression and classification models, including deep learning and recently developed approaches such as federated learning, will be investigated, implemented, and tested in this study. Regression models will predict the exact LOS, while classification models will categorize patients into different LOS ranges. Real-world datasets will be used for model training and validation to ensure the robustness and generalizability of the developed models. The results of the different models will be compared and discussed to identify the most effective approach for predicting LOS. Because most real-world datasets are significantly imbalanced, the imbalance in the dataset will be analyzed and addressed to improve model performance and reliability.
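To make two of the preprocessing steps above concrete, here is a minimal sketch in plain Python of one-hot encoding and of SMOTE-style oversampling. It is a simplified illustration, not the project's pipeline. The function names, the admission categories, and the two numeric features are all hypothetical, and a maintained library implementation (for example the SMOTE class in imbalanced-learn) would normally be preferred in practice.

```python
import random
from typing import List, Sequence

def one_hot(values: Sequence[str]) -> List[List[int]]:
    """Map each categorical value to a 0/1 vector over the sorted categories."""
    categories = sorted(set(values))
    index = {category: i for i, category in enumerate(categories)}
    rows = []
    for value in values:
        row = [0] * len(categories)
        row[index[value]] = 1
        rows.append(row)
    return rows

def smote_like(
    minority: List[List[float]], n_new: int, k: int = 2, seed: int = 0
) -> List[List[float]]:
    """Create n_new synthetic minority samples.

    Each new sample lies on the line segment between a randomly chosen
    minority sample and one of its k nearest minority neighbours.
    """
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Other minority samples, nearest first (squared Euclidean distance).
        others = [p for p in minority if p is not base]
        others.sort(key=lambda p: sum((a - b) ** 2 for a, b in zip(base, p)))
        neighbour = rng.choice(others[:k])
        gap = rng.random()  # position along the segment from base to neighbour
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbour)])
    return synthetic

# Hypothetical admission types and long-stay patients as (age, lab value).
encoded = one_hot(["emergency", "elective", "emergency", "urgent"])
long_stay = [[70.0, 9.1], [82.0, 8.4], [76.0, 9.8]]
extra_samples = smote_like(long_stay, n_new=4)
print(encoded)
print(extra_samples)
```

Plain interpolation like this suits numeric features. One-hot columns would need separate handling, since a value between 0 and 1 is not a valid category.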

Sentinel LLM

This project uses LLMs to develop chatbots that help medical professionals diagnose critical medical conditions and avoid organ dysfunction, while reducing human error and protocol ambiguity. Large language models (LLMs) are frontier neural network techniques that use self-supervised learning to process and understand human language. This project aims to develop an interactive clinical chatbot fine-tuned on sepsis-specific knowledge. Built on a compact, decoder-only transformer model such as Phi-2 or Mistral-7B and hosted in a lightweight Gradio interface, the tool will be designed to assist with real-time clinical queries. Students will complete the end-to-end machine learning pipeline, from data collection through fine-tuning, testing, and deployment, with a strong emphasis on safety, groundedness, and clinical relevance.

Artificial Intelligence, Robotics

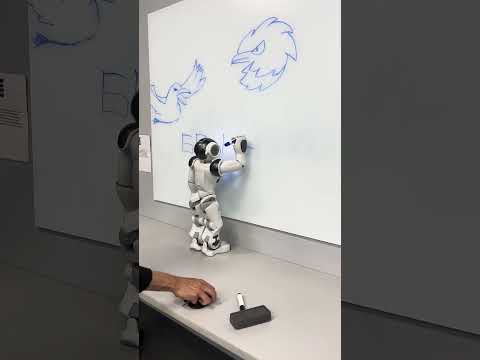

NAO Robot

NAO is an autonomous and programmable robot with a long list of possible functions that involve topics such as human interaction, object sensing, and humanoid movements. Users can program NAO with the Python programming language and the Choregraphe desktop application.

Real-Time Control

The following video shows an SE-641 (Real-Time Robot Control) final project involving robotic control using NAO. This project demonstrates a multi-faceted task involving 1) grabbing and carrying objects, 2) fine motor actions, and 3) object detection and recognition.

The following video shows SE-641 (Real-Time Robot Control) final projects involving real-time robotic control using NAO. The projects demonstrated are 1) a robot calculator, 2) grabbing and carrying objects, and 3) playing rock-paper-scissors.

Dialogue and Face Recognition

In the following project, students have programmed NAO to demonstrate several ways in which the robot can converse with humans, and how the robot can adapt its conversations based on a face it recognizes. This project sets up a strong base for students to build on to explore more complex problems with NAO.

Deep Learning-Based Hand Gesture Recognition and Drone Flight Controls

In this master’s thesis project, a hand gesture recognition system is designed and developed to control the flight of unmanned aerial vehicles (UAVs). To train the system to recognize the designed gestures, skeleton data collected from a Leap Motion Controller are converted into two different data models. A training dataset of 9,124 samples and a testing dataset of 1,938 samples were created to train and test three proposed deep learning neural networks: a 2-layer fully connected neural network, a 5-layer fully connected neural network, and an 8-layer convolutional neural network. The static testing results show that the 2-layer fully connected neural network achieves an average accuracy of 98.2% on normalized datasets and 11% on raw datasets. The 5-layer fully connected neural network achieves an average accuracy of 95.2% on normalized datasets and 45% on raw datasets. The 8-layer convolutional neural network achieves an average accuracy of 96.2% on both normalized and raw datasets. Testing on a drone-kit simulator and a real drone shows that this system is feasible for drone flight controls.
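The gap between the normalized and raw results for the fully connected networks suggests how much the input representation matters. The thesis summary does not say how the skeleton data were normalized, so the sketch below shows only one common choice, assumed here for illustration: express each joint relative to the palm position and scale so that the farthest joint has distance 1. The function name `normalize_hand` and the sample coordinates are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]

def normalize_hand(palm: Point, joints: List[Point]) -> List[Point]:
    """Translate joints so the palm is the origin, then scale to unit size.

    Assumed normalization scheme, not necessarily the one used in the thesis.
    """
    shifted = [
        (j[0] - palm[0], j[1] - palm[1], j[2] - palm[2]) for j in joints
    ]
    # Largest palm-to-joint distance; used as the scale factor.
    scale = max(math.sqrt(x * x + y * y + z * z) for x, y, z in shifted)
    if scale == 0:
        return shifted  # all joints coincide with the palm; nothing to scale
    return [(x / scale, y / scale, z / scale) for x, y, z in shifted]

# The same gesture captured near the sensor and then farther away,
# at twice the size and a different position.
near = normalize_hand((0.0, 0.0, 0.0), [(3.0, 4.0, 0.0), (0.0, 2.0, 0.0)])
far = normalize_hand((100.0, 50.0, -20.0),
                     [(106.0, 58.0, -20.0), (100.0, 54.0, -20.0)])
print(near)
print(far)
```

Both captures map to the same coordinates after normalization, so a network trained on such data does not need to learn that hand position and size are irrelevant to the gesture.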

Motion Control of Robots Using Speech

This is a graduate class project. Students were asked to design and develop a real-time application in the Linux environment to control the motion of two robots. The motion of the robots is controlled purely by speech, so a speech recognition system was also developed to produce the control commands.

A Robot to Improve Verbal Communication Skills of Children with Autism

(In collaboration with Dr. Patrizia Bonaventura of the Speech Pathology Department)

Children diagnosed with autism typically display difficulty in the areas of social interaction and verbal communication. Various studies suggest that children with autism respond better to robots than to humans. A further motivation for using robots is the special interest of autistic children in electronic and mechanical devices. We have been experimenting with various types of robots. In this project, we are seeking answers to the following questions: Can an interactive robot help children with autism to respond in interpersonal communication? Does the children’s interaction with a human differ from their interaction with a robot? How does the form of the robot affect the children’s interaction with it?

Data Science, Natural Language Processing, Image Processing, Low Code & No Code

Mock AI Audit for Fair and Transparent Insurance Cost Prediction using No Code, Low Code

This project explores the development and auditing of an AI-based insurance pricing model through a two-phase approach. In Phase 1, a model was created using Orange, simulating how an insurance company might determine policy prices based on customer data. Phase 2 audited this model for fairness and transparency using SHAP and fairness widgets. This work is grounded in the broader context of ethical AI use in real-world systems. We considered regulatory frameworks such as New York City’s Automated Employment Decision Tools Law (AEDT Law) and Colorado’s AI Insurance Pricing Regulation, both of which emphasize accountability, transparency, and nondiscrimination in algorithmic decision-making. The methodology also aligns with the principles of AI ethics, including fairness, explainability, accountability, and the right to meaningful oversight. By combining technical modeling with ethical auditing, the goal was to simulate how AI in insurance can be both effective and responsible.

Informed and Timely Business Decisions – A Data-driven Approach

One of the main characteristics of business rules (BRs) is their propensity for frequent change, due to factors internal or external to an enterprise. As these rules change, their immediate dissemination across people and systems in an enterprise becomes vital. A delay in dissemination can adversely impact the reputation of the enterprise and cause significant loss of revenue. Current business rule management systems (BRMS) are often maintained by the IT group within a company, so modifications to the BRs intended by executive management are not instantaneous, since they have to be coded and tested before being deployed. Moreover, executives may not be able to make the best decisions without analyzing historical data and quickly simulating what-if scenarios to visualize the effects of a set of rules on the business. Some of the systems that provide this functionality are prohibitively expensive. This project proposes to solve these challenges by using Big Data analysis to source, clean, and analyze historical data that is used for mining business rules, which can be visualized, tested on what-if scenarios, and immediately deployed without the intervention of the IT group. The proposed approach uses open source components to mine stop loss rules for financial systems.

Touristic Sites Recommendation System

Recommending touristic places for visitors is an important service of touristic websites. Most websites allow visitors to either numerically rate or leave a review about the site they visited to express their opinion. Those reviews help future visitors choose the place that is most attractive to them and at the same time allow the owner of the touristic place to improve it and increase visitor satisfaction. Sometimes the number of reviews can be overwhelming, discouraging prospective visitors from manually reading all of them to draw a conclusion. Relying only on the star rating of a touristic place can be misleading, as it lacks the context and details of the reviews. This is why we propose a semantic-based recommender system that relies on natural language processing and big data analysis of the visitors’ reviews. The touristic places recommendation uses keywords extracted from “cleaned” data, together with sentiment analysis of the reviews and context-sensitive information such as geographic location, temporal selection, and keyword translation.

Link-Analysis

Link-Analysis is a project to extract who, what, when, where, why, and how data from Wikipedia. Beyond extracting the data and getting it into a usable form, this project raises many interesting data processing issues, such as recognizing that the sentence ‘George Washington Carver was named after George Washington’ is discussing two different people. The extracted data can be used for a wide range of purposes.

Devdas

Devdas (a Sanskrit word meaning Servant of the Gods) is intended to be an ‘Alexa’ on steroids. It is a platform of cooperating intelligent agents which can exploit current advances in computer science: deep learning, big data, vision, and even quantum computing. There are currently plans to incorporate at least 30 different agents in the platform, which leaves more than enough work to keep the students busy.

BioSimVis

BioSimVis is a vision processing program that is being developed to take into account how a biological entity processes visual information. The actual process is far different from how computers currently address the problem. The computer tries to process an entire scene, while the eye focuses on a smaller region at a time (saccades). The digital image is rectilinear, while the nerves on the back of the eye are arranged in a phyllotactic structure. A second part of the program is to detect human emotion from image data. This project will use the Woody Allen movie Hannah and Her Sisters as the training and testing dataset.
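To show how a phyllotactic arrangement differs from a rectilinear pixel grid, the sketch below generates sample positions with Vogel's spiral, a standard mathematical model of phyllotaxis in which point k sits at radius proportional to the square root of k and at angle k times the golden angle. Whether BioSimVis uses this particular model is an assumption, and the function name `phyllotactic_points` is hypothetical.

```python
import math
from typing import List, Tuple

# Golden angle in radians, about 137.5 degrees.
GOLDEN_ANGLE = math.pi * (3.0 - math.sqrt(5.0))

def phyllotactic_points(n: int, spacing: float = 1.0) -> List[Tuple[float, float]]:
    """Return n (x, y) sample positions laid out on Vogel's spiral."""
    points = []
    for k in range(n):
        radius = spacing * math.sqrt(k)  # keeps point density roughly uniform
        theta = k * GOLDEN_ANGLE         # each point turns by the golden angle
        points.append((radius * math.cos(theta), radius * math.sin(theta)))
    return points

samples = phyllotactic_points(200)
print(samples[:3])
```

Unlike rows and columns of pixels, these positions have no preferred horizontal or vertical direction, which is one way a sampling pattern can depart from the rectilinear layout of a digital image.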

Rapid Homoglyph Prediction and Detection

As technology permeates the globe, it is becoming necessary to support an increasing number of languages, and hence character sets. Many character sets contain characters that look similar or identical to characters from other character sets (known as homoglyph characters). This poses a number of unique problems, such as the homoglyph URL attacks frequently used in phishing scams. The previously proposed solutions to these problems have mostly focused on restricting the characters available to users. This project proposes a new approach which can predict and detect homoglyphs with a high level of accuracy. This methodology simulates the human behavior of “visual scanning” and allows large amounts of visual data to be represented in a format that is easily and quickly searchable. Additionally, it employs a pre-processing time-memory trade-off to enable the detection and prediction of homoglyphs in fractions of a second.
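The time-memory trade-off mentioned above can be illustrated with a small sketch: do the expensive comparison work once, store the result in a table, and answer each later query with a lookup. The project's own method simulates visual scanning of the characters. The sketch substitutes a tiny hand-picked table of look-alike characters instead, so `CONFUSABLES`, `skeleton`, `build_index`, and `find_spoof` are hypothetical names and the table is far from complete.

```python
from typing import Dict, Iterable, Optional

# Hand-picked look-alikes mapped to the Latin letter they resemble.
CONFUSABLES: Dict[str, str] = {
    "\u0430": "a",  # CYRILLIC SMALL LETTER A
    "\u0435": "e",  # CYRILLIC SMALL LETTER IE
    "\u043e": "o",  # CYRILLIC SMALL LETTER O
    "\u0440": "p",  # CYRILLIC SMALL LETTER ER
    "\u0441": "c",  # CYRILLIC SMALL LETTER ES
    "\u03bf": "o",  # GREEK SMALL LETTER OMICRON
}

def skeleton(text: str) -> str:
    """Replace each known look-alike character with its Latin counterpart."""
    return "".join(CONFUSABLES.get(ch, ch) for ch in text)

def build_index(trusted: Iterable[str]) -> Dict[str, str]:
    """Precompute skeleton -> trusted name once, trading memory for speed."""
    return {skeleton(name): name for name in trusted}

def find_spoof(candidate: str, index: Dict[str, str]) -> Optional[str]:
    """Return the trusted name that candidate imitates, or None."""
    target = index.get(skeleton(candidate))  # constant-time dictionary lookup
    if target is not None and target != candidate:
        return target
    return None

index = build_index(["example.com", "apple.com"])
print(find_spoof("ex\u0430mple.com", index))  # Cyrillic "a" in place of Latin "a"
print(find_spoof("example.com", index))
```

The table is built once, and each later check costs a single pass over the candidate string plus a dictionary lookup, which is the kind of preprocessing that makes sub-second detection plausible.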

Improving Text Mining with Enhanced Named-Entity Recognition

We are using a state-of-the-art parser to process natural language texts such as news articles. The parser uses a named-entity recognizer (NER) to identify phrases as names of people, organizations, and locations. DBpedia is a semantically organized database that is automatically created from the structured content of Wikipedia. In accordance with the principles of the Semantic Web, DBpedia has a large ontology that further categorizes people, organizations and locations. By accessing the latest archives of DBpedia, we are experimenting with matching the named entities found in the text with those categorized by DBpedia to obtain a finer-grained categorization. For example, this technique allows us to identify people as scientists or politicians, and organizations as companies or universities. Our goal is to use this information to improve the performance of text clustering and classification methods.

Urban Data Analysis

Data analytics involves the study and application of algorithms and methodologies to discover insights from data. Specifically, our research activity is focused on the analysis of urban data, aimed at predicting crime patterns and user mobility behaviors. Such predictive models can be used to improve the provisioning and management of city resources, to anticipate criminal activity, and to predict mobility patterns and avoid traffic congestion.

Information Security

Privacy Control of Social Networking

Social media is a de facto standard in online communication. When users interact with others on a large scale, privacy plays an increasingly important part in maintaining safety and user confidence in a social media platform. Allowing end-users to control their interactions and data with others is a way to inspire end-user confidence. Many control schemes exist; however, they are flawed on two key points: end-users may not understand exactly what information is being shared with others, and the control schemes are hidden and abstract. In this project, we propose an idea with a prototype, named Solendar, that attempts to solve the issue with a special authorization approach. It has a pass system that allows end-users to explicitly specify which users are able to see and interact with their information on an individual level. It has a group system that builds on the pass system, allowing end-users to organize other users into logical segments for effective information sharing while retaining control of privacy.
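One way to picture the pass and group systems described above is as a small data structure in which nothing is visible unless a pass was explicitly granted, and granting access to a group simply issues a pass to each member. The sketch below is an illustration under that assumption and is not Solendar's actual design. The class `PrivacyControl` and all of its method names are hypothetical.

```python
from typing import Dict, Iterable, Set

class PrivacyControl:
    """Default-deny sharing: an item is visible only to users holding a pass."""

    def __init__(self) -> None:
        self._passes: Dict[str, Set[str]] = {}  # item -> users holding a pass
        self._groups: Dict[str, Set[str]] = {}  # group name -> member users

    def grant_pass(self, item: str, user: str) -> None:
        """Explicitly allow one user to see one item."""
        self._passes.setdefault(item, set()).add(user)

    def revoke_pass(self, item: str, user: str) -> None:
        """Withdraw a previously granted pass, if any."""
        self._passes.get(item, set()).discard(user)

    def define_group(self, name: str, members: Iterable[str]) -> None:
        """Organize users into a named segment."""
        self._groups[name] = set(members)

    def grant_group(self, item: str, group: str) -> None:
        """Build on the pass system: issue an individual pass to each member."""
        for member in self._groups.get(group, set()):
            self.grant_pass(item, member)

    def can_view(self, item: str, user: str) -> bool:
        """A user can view an item only if a pass was granted."""
        return user in self._passes.get(item, set())

    def viewers(self, item: str) -> Set[str]:
        """Show the owner exactly who can currently see an item."""
        return set(self._passes.get(item, set()))

control = PrivacyControl()
control.define_group("family", ["ann", "bob"])
control.grant_group("vacation_photo", "family")
control.revoke_pass("vacation_photo", "bob")
print(sorted(control.viewers("vacation_photo")))
```

Because group grants expand into individual passes, the owner can always list who sees an item and can revoke one person without dissolving the group, which speaks to the concern that control schemes are hidden and abstract.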

Augmented/Virtual Reality

1995-2016 Barnegat Bay Algal Bloom VR Visualization