Opinion Taker. Opinion Maker.

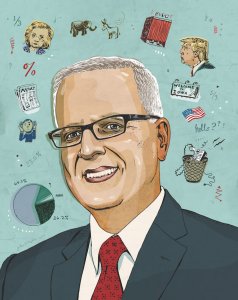

An interview with Patrick Murray, founding director of the Monmouth University Polling Institute.

Since its inception in 2005, the Monmouth University Polling Institute has been praised for its ability to accurately and consistently gauge the opinions of New Jersey residents. Nothing changed when the institute turned its attention to national polling last year. Its accuracy throughout the primaries helped earn the institute an A+ rating from FiveThirtyEight.com, and turned its founding director, Patrick Murray, into a go-to source for the national media. We caught up with Murray recently and asked him to opine about polling’s impact on the political process, this year’s historic presidential election, and more.

How did you get into polling?

I went to college with the idea I might go into engineering because I had a math mind. But my passion was really an interest in politics, so I ended up getting a political science degree…. It was when I went to graduate school for political science at Rutgers that I found I was consistently drawn to research that involved polling, about voting behavior and public opinion. One day, I walked across the street from the political science department to the Eagleton Institute, which had a poll, and just simply asked them if there was anything for a graduate student to do there. And that was the start of it, twenty-some years ago.

What’s changed in polling during that time?

Fewer people are picking up the phone. When I started out in this business, if you got a phone call, you picked it up. You wanted to know who was calling you. If we can get somebody to pick up the phone, the vast majority of them will do the interview. We’re just having trouble getting people to pick up the phone…. There’s also been a proliferation of polls. That’s good and bad. It’s bad because there’s more bad polls out there. But it’s good because you get a better understanding of what’s going on. You don’t have to rely on one poll.

Monmouth’s Polling Institute is rated as one of the best in the nation. Are your polls more accurate than most?

I’ll let others judge the accuracy of our polls. I will say that our approach is different in some ways, and the way we ask questions about policy issues is different than others, because we try to ask them in the way that people have conversations about these policy issues.

A lot of academics ask questions that an academic would be interested in but that might not interest the person on the street. My history has been to try to hear the questions in a way the typical person talks about the issue. In New Jersey, you go around; you eavesdrop on people in diners because that’s the place where people talk about politics. When [Monmouth] started doing national polling, I actually went out to Iowa and New Hampshire and sat down and talked to voters to find out what their issues were and, more importantly, the way they talked about those issues. The questions we asked were reflective of what the voters were concerned about and not just what the pundits were concerned about.

Do polls ever create news that isn’t there?

Sometimes we’ll get poll results where the media will proclaim with banner headlines that the majority of the public calls for the government to do X, whereas the public wasn’t really calling for this. It was just a pollster asked the question: should the government do X or Y, and since that was the way the question was phrased, people answering the polls said X. But if you had followed up and said, “How important is that to you? Or do you care?” you might have found the vast majority don’t care, or would have said, “I would prefer the government not do either X or Y.”

Very few reporters actually look at the way a question was asked, and there are many ways to affect the responses in a poll by artfully changing the way a question is asked. In most cases, with the legitimate public polls, it’s not done on purpose. But still, this goes back to what I was saying [about] why our approach is different: I really try to make sure that I’m hearing the question in the way that somebody sitting at their kitchen table is talking about an issue and not how a pundit is talking about it. Because that’s how you can get skewed questions, and if you get skewed questions, you can get skewed responses.

Some media outlets have suggested that political polling is broken. Is it?

No. There have been some misses with certain elections, but there are more hits than misses. If this were baseball, most pollsters would be in the Hall of Fame, in terms of their track records…. Polling is an imperfect tool. It’s designed to measure the here and now among everybody who you could potentially talk to. It’s not a perfect tool to determine how many people are actually going to participate in an election and how many are not, and what they’re going to do once they get there.

Are you saying that when a poll gets an election wrong, it’s not because polling is broken, it’s because people tell pollsters one thing and then do another?

It has nothing to do with whether the polls are broken or not. It has to do with the imperfect nature of polling to predict elections. A poll is literally a snapshot in time. People are very bad predictors of their own behavior, and in enough cases, that could throw off a poll by a few percentage points.

Beyond the margin of error?

Yes…. [Recently there have been a few] high-profile misses: a Kentucky election this past year, the Brexit vote in Britain, an Israeli election where they were off by enough that it raised questions about polling. I think a lot of it has to do with pollsters using the old-fashioned random digit dialing methodology, where you just start calling everybody and asking what they’re going to do, and our declining response rates affecting the ability to be consistently accurate.

Do we as a society give too much weight to these polls?

I think there’s a certain breathlessness with election polling, that each poll is met with banner headlines that overstate what the poll actually means. We’ve got to sit back, take a breath, and realize that this is one poll at one point in time. It may suggest a direction that things are going, but we know we are still a ways off from the election and things could change.

What’s been the most surprising part of the current election cycle?

That Donald Trump has been able to defy every known political rule that we have. For example, before he announced he was running for president, he had very bad ratings among Republican voters. The majority of them had a negative view of him, and he was extremely well known. The rules of politics tell us that it’s very difficult for someone who is well known to change their ratings, unless they do something unusual: either something very good or something bad. What Donald Trump did that was unusual was announce that he was running for president and that he was going to build a wall. Suddenly, his favorability ratings among Republican voters do a 180 within a week of him making that announcement. We had never seen anything like that.

From then on, he’s broken every rule. Things that should have stuck to him did not, and he was doing things that we had not seen any other politician be able to do in terms of tracking his polling numbers. All of us have pretty much given up on predicting what kind of impact the next Donald Trump move will have on public opinion.

The Clinton side has been more predictable, although the level of anger and frustration in the electorate, the way it played out in both parties, has been unusual. It didn’t look at first like Bernie Sanders was going to gain any traction. Last summer it looked like Clinton was going to be able to pull this out and comfortably win the nomination process. But something gelled with the Sanders voters, and he just started surging through the fall of 2015, and that helped make this a tighter race.

Did your polling predict any of that?

We saw the big shift from a huge Clinton lead in Iowa to what basically ended up being a razor-thin race. We had some misses, but we picked up some wins that nobody else picked up, such as Sanders’ win in Oklahoma. So I think, overall, we had a pretty good track record.

Does polling help the political process?

It can when used properly. While the headlines and all the cable news graphics focus on the horse race question—who’s ahead and who’s behind—every pollster is asking questions about why the electorate feels the way it does, what causes the mood of the country, and the implications that can have on the ability to govern down the line. Unfortunately, those questions aren’t getting as much attention as the snapshot of who’s ahead [and] who’s behind today—and yet that can change in 24 hours. The underlying mood is not going to change, and that’s giving good information to policymakers about the hurdles they face in the future. But unless they pay closer attention to it, and take it seriously, they’re going to continue to face this problem.